Shihao Li

I am a third-year Ph.D. student in Mechanical Engineering at The University of Texas at Austin, advised by Dr. Dongmei Chen in the Advanced Power Systems and Controls Lab. My research focuses on control theory, optimization, reinforcement learning, and robotics.

I am currently exploring applications in robot learning and embodied AI, with hands-on experience deploying vision-language-action models. My work spans deep reinforcement learning for sequential decision-making, LLM-based multi-agent frameworks for automated control synthesis, and distributionally robust predictive control.

I received my B.S. in Mechanical Engineering from Pennsylvania State University in 2023, and my M.S. in Mechanical Engineering from UT Austin in 2025.

Research Interests

- Robot Learning & Embodied AI: Vision-language-action models, visuomotor policy learning

- Deep Reinforcement Learning: Curriculum learning, policy optimization for sequential decision-making

- LLM-Based Control Synthesis: Multi-agent LLM systems for certified controller design

- Control & Optimization: Model predictive control, distributionally robust optimization, system identification

News

- [Feb 2026] Two papers accepted to NAMRC 54! See you at Penn State this summer; I'm excited to return to my alma mater!

- [Jan 2026] Paper on repetitive learning MPC for roll-to-roll manufacturing accepted to ACC 2026. See you in New Orleans this summer!

- [2025] Paper on MDR-DeePC accepted to MECC 2025.

- [2025] Paper on Robust Optimal Task Planning to Maximize Battery Life accepted to MECC 2025.

- [2025] Paper on adhesion dynamics in roll-to-roll lamination published in Manufacturing Letters.

Selected Research Highlights

Developed S2C, a multi-agent LLM framework that synthesizes certified H∞ controllers via convex optimization with provable safety guarantees. Achieved 100% synthesis success on 14 COMPleib benchmarks.

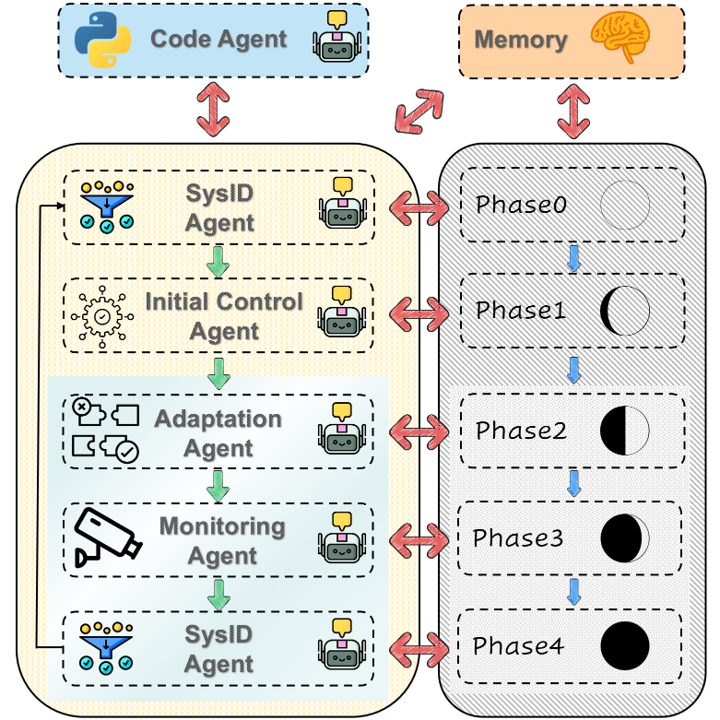
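For context, one standard convex certificate for an H∞ bound is the bounded real lemma. Take a continuous-time system $\dot x = Ax + Bw$, $z = Cx + Dw$. Then $A$ is Hurwitz and the H∞ norm from $w$ to $z$ is below $\gamma$ if and only if some matrix $P$ satisfies the linear matrix inequality below. Because this is a semidefinite feasibility problem, a feasible $P$ returned by a solver is a checkable certificate. This is textbook background, not necessarily the exact formulation S2C uses. In controller synthesis, the closed-loop matrices replace $A, B, C, D$, and a change of variables is typically needed to keep the problem convex.

$$
P \succ 0, \qquad
\begin{bmatrix}
A^\top P + P A & P B & C^\top \\
B^\top P & -\gamma I & D^\top \\
C & D & -\gamma I
\end{bmatrix} \prec 0
$$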

Developed a multi-agent framework that automates the full control-engineering lifecycle for roll-to-roll manufacturing, from system identification to sim-to-real adaptation. Achieved robust tension regulation under 50% model uncertainty with safety-guaranteed deployment.

Repetitive learning MPC (RLMPC) is a control framework that gives machines "muscle memory." By updating its internal linear models with data from previous cycles, RLMPC reduced tracking error by 64% in roll-to-roll manufacturing while maintaining real-time computation (3 ms).

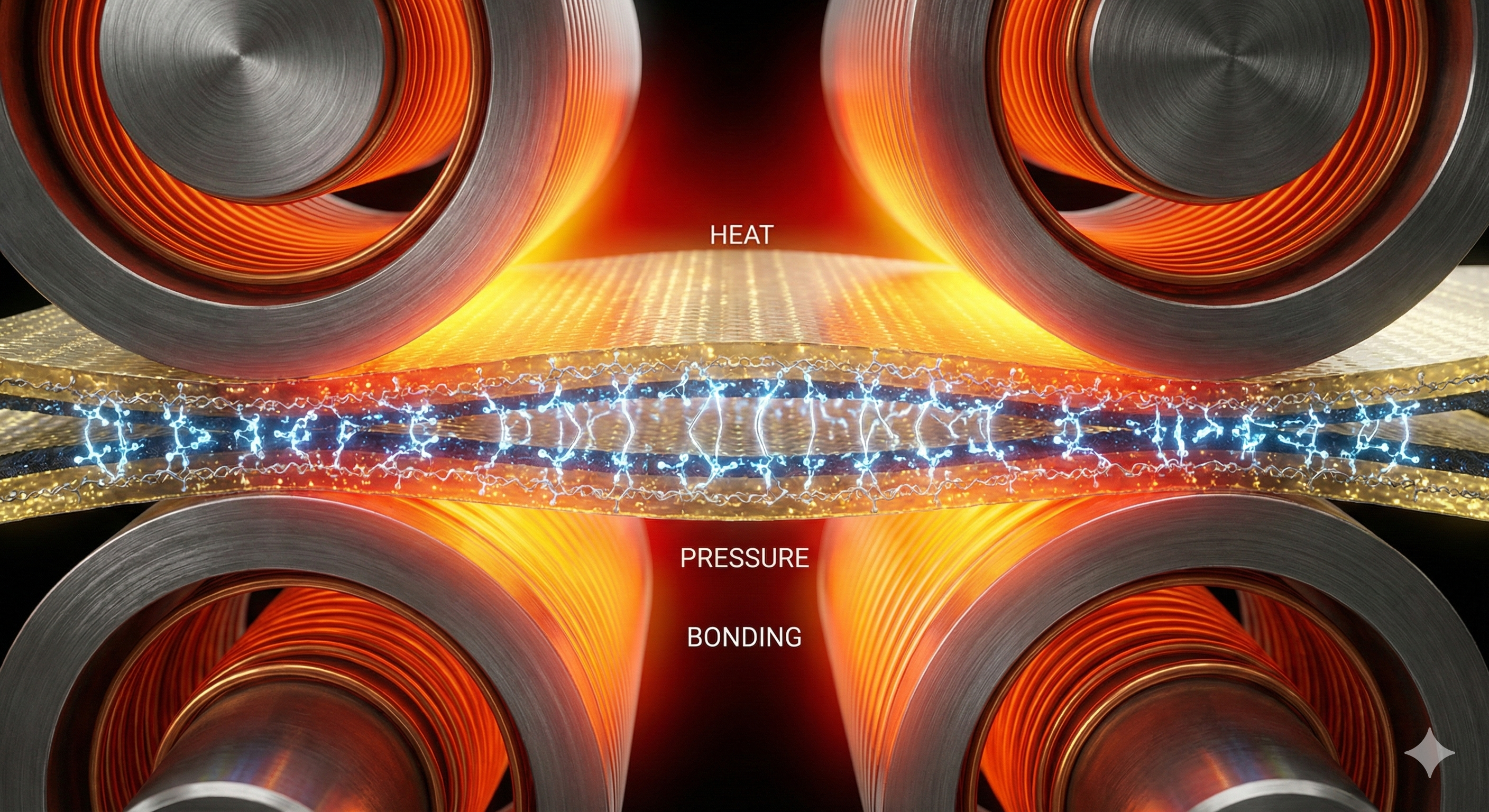
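The idea of refreshing a linear model with data from the previous cycle can be illustrated with ordinary least squares on a scalar model. This is a hypothetical sketch. The scalar model x[k+1] = a·x[k] + b·u[k], the function name `fit_linear_model`, and the batch least-squares refit are assumptions for illustration, not the RLMPC update from the paper. In a scheme like this, the refit model would serve as the MPC prediction model for the next cycle.

```python
# Hypothetical sketch: refit a scalar linear model x[k+1] = a*x[k] + b*u[k]
# by ordinary least squares on (x, u) data logged during the previous cycle.
# Illustration only; this is NOT the RLMPC update rule from the paper.

def fit_linear_model(x, u):
    """Return (a, b) minimizing sum_k (x[k+1] - a*x[k] - b*u[k])**2."""
    if len(x) != len(u) + 1:
        raise ValueError("expected len(x) == len(u) + 1")
    # Accumulate the 2x2 normal equations [sxx sxu; sxu suu] [a; b] = [sxy; suy].
    sxx = sxu = suu = sxy = suy = 0.0
    for k in range(len(u)):
        xk, uk, y = x[k], u[k], x[k + 1]
        sxx += xk * xk
        sxu += xk * uk
        suu += uk * uk
        sxy += xk * y
        suy += uk * y
    det = sxx * suu - sxu * sxu
    if abs(det) < 1e-12:
        raise ValueError("data not informative enough (singular normal equations)")
    # Solve the 2x2 system with Cramer's rule.
    a = (suu * sxy - sxu * suy) / det
    b = (sxx * suy - sxu * sxy) / det
    return a, b


# Usage: log one noise-free cycle from an assumed "true" plant, then refit.
true_a, true_b = 0.9, 0.5
u_prev = [1.0, 0.0, -0.5, 0.8, 0.2, -1.0]
x_prev = [0.0]
for uk in u_prev:
    x_prev.append(true_a * x_prev[-1] + true_b * uk)

a_hat, b_hat = fit_linear_model(x_prev, u_prev)
print(round(a_hat, 6), round(b_hat, 6))
```

With noise-free data the fit recovers the assumed plant parameters. With real cycle data, a recursive or forgetting-factor variant would usually be preferred so that older cycles fade out.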

A first-principles process model integrating Hertzian contact theory and transient heat transfer to predict adhesion quality. Identified a dual-heating strategy that reduces process time by 62.6%, enabling significant throughput increases for flexible electronics manufacturing.
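For reference, the classical Hertz solution for two parallel cylinders in line contact gives the contact half-width $a$ and peak pressure $p_{\max}$. Its inputs are the load per unit length $P$, the radii $R_1, R_2$, the Young's moduli $E_1, E_2$, and the Poisson's ratios $\nu_1, \nu_2$. Modeling a lamination nip this way is an assumption for illustration, and the paper's model may differ.

$$
\frac{1}{R^*} = \frac{1}{R_1} + \frac{1}{R_2}, \qquad
\frac{1}{E^*} = \frac{1-\nu_1^2}{E_1} + \frac{1-\nu_2^2}{E_2}, \qquad
a = \sqrt{\frac{4 P R^*}{\pi E^*}}, \qquad
p_{\max} = \frac{2P}{\pi a}
$$

For a web moving at speed $v$, a point spends roughly $t_c = 2a/v$ in the contact zone. This is one way a contact model can set the time window for a transient heat-transfer calculation.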

Extended datamodels from machine learning to Model Predictive Path Integral (MPPI) control, achieving a 5× reduction in samples (2000 → 400) while improving obstacle-avoidance safety through learned influence prediction.

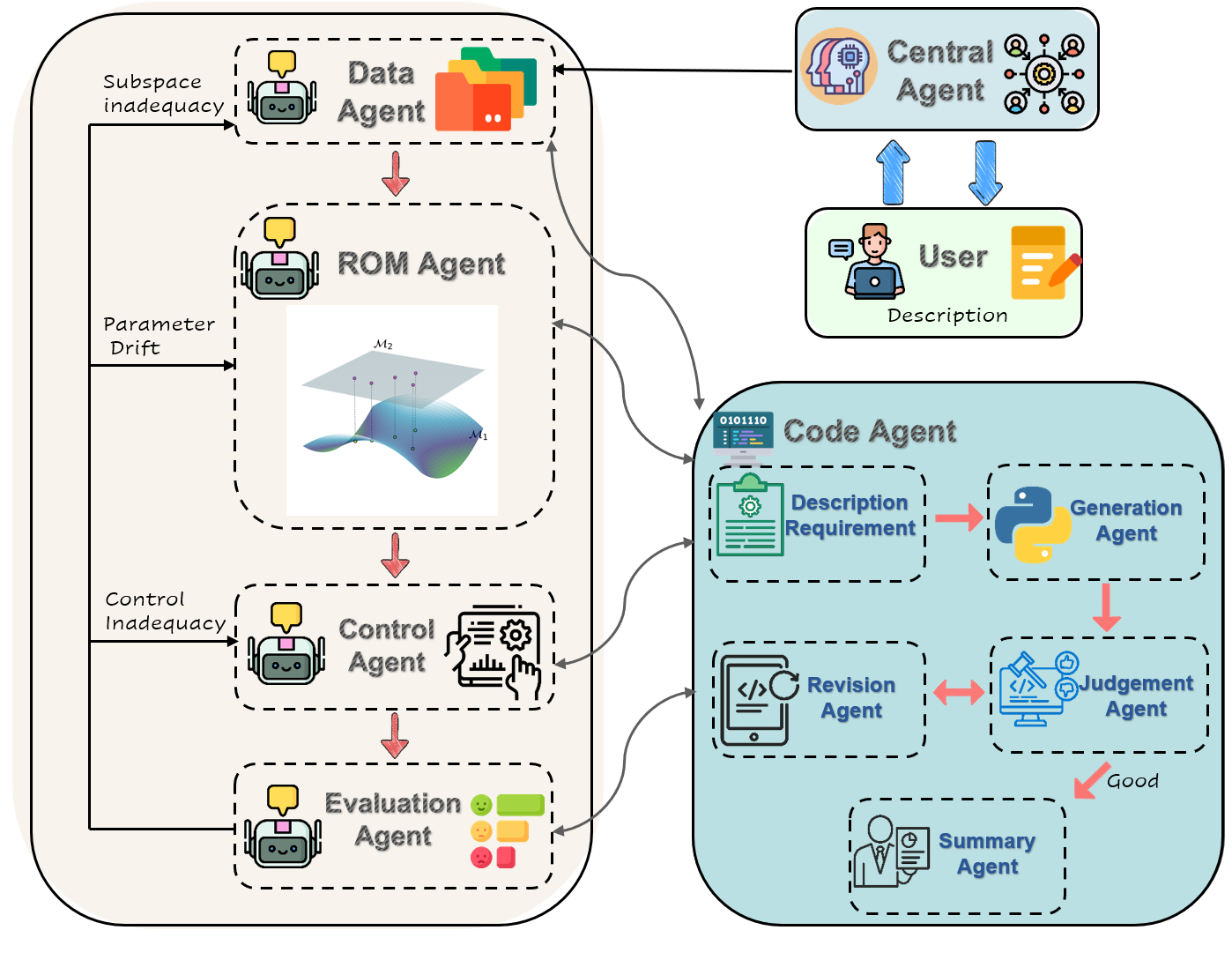
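For readers unfamiliar with MPPI, here is a minimal sketch of the generic update. It samples perturbed control sequences, weights them by exponentiated cost, and averages the perturbations into the nominal plan. Several things are illustrative assumptions: the 1-D single-integrator plant, the quadratic cost, the parameter values, and the helper name `mppi_step`. The datamodel-based influence prediction is not reproduced here. `n_samples` is simply the knob whose value the highlight reports reducing from 2000 to 400.

```python
import math
import random


def mppi_step(u_nom, x0, n_samples, sigma, lam, target, rng):
    """One generic MPPI update for an assumed 1-D plant x[t+1] = x[t] + u[t]."""
    horizon = len(u_nom)
    costs, noises = [], []
    for _ in range(n_samples):
        # Gaussian perturbation of the whole nominal control sequence.
        eps = [rng.gauss(0.0, sigma) for _ in range(horizon)]
        x, cost = x0, 0.0
        for t in range(horizon):
            u = u_nom[t] + eps[t]
            x = x + u
            cost += (x - target) ** 2 + 0.01 * u * u  # tracking + control effort
        costs.append(cost)
        noises.append(eps)
    # Exponentiated-cost weights; subtracting the minimum cost avoids underflow.
    s_min = min(costs)
    weights = [math.exp(-(c - s_min) / lam) for c in costs]
    total = sum(weights)
    # The weighted average of the perturbations updates the nominal plan.
    return [
        u_nom[t] + sum(w * eps[t] for w, eps in zip(weights, noises)) / total
        for t in range(horizon)
    ]


# Usage: receding-horizon loop driving the toy plant from 0 toward 5.
rng = random.Random(0)
horizon = 10
u_plan = [0.0] * horizon
x = 0.0
for _ in range(30):
    u_plan = mppi_step(u_plan, x, n_samples=400, sigma=0.5, lam=1.0, target=5.0, rng=rng)
    x += u_plan[0]               # apply only the first control
    u_plan = u_plan[1:] + [0.0]  # shift the plan forward, pad with zero
print(f"final state: {x:.2f}")
```

The temperature `lam` trades off between averaging many samples and picking the single best one. Fewer samples make each update cheaper but noisier, which is the cost that a smarter sample-selection scheme aims to avoid.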

AURORA is an autonomous framework for maintaining control of complex, high-dimensional systems. It continuously monitors performance and, when system dynamics drift, automatically rebuilds reduced-order models (ROMs) and retunes controllers, achieving a 100% success rate on dynamic benchmarks.

Coursework

| Category | Course |
|---|---|
| Control Systems | Linear Systems Analysis |
| | Multivariable Control Systems |
| | Nonlinear Control Systems |
| | Automatic Control System Design |
| | Optimal Control Theory |
| | Model Predictive Control |
| | Modeling of Physical Systems |
| Optimization | Linear Programming |
| | Nonlinear Programming |
| | Convex Optimization |
| Machine Learning & AI | Deep Reinforcement Learning |
| | Machine Learning |
| Robotics & Estimation | State Estimation and Localization |
| Mathematics | Real Analysis |
| | Functional Analysis |
| | Introduction to Topology |
| | Linear Algebra |
| | Stochastic Processes |
| Dynamics | Dynamics of Mechanical Systems |

Contact

- Email: shihaoli01301@utexas.edu

- LinkedIn: Shihao Li

- GitHub: SheeHow